By Andreas Roessler, BackITapp Consulting and Emil Olbrich, Primelime

The history of mobile networks shows that spectrum decisions shape both technical progress and business outcomes. In 5G, millimeter-wave frequencies (mmWave or FR2) were marketed as revolutionary, only to stumble when physics and deployment economics caught up. As we look toward 6G, FR3 — the upper midband between 7.125 and 24.25 GHz — has become the prime candidate. The question is simple but loaded: will FR3 succeed where FR2 faltered, or is the industry setting itself up for the same cycle of hype and disappointment?

FR2/mmWave in 5G: Promise, Hype, and Early Wins

A major innovation in 5G was the global adoption of mmWave frequencies, formally defined in standards as Frequency Range 2 (FR2). It was positioned as a solution to the looming spectrum crunch caused by the rapid growth of mobile video streaming and social media traffic, both of which were straining existing 4G LTE networks.

Academic researchers had been working since the early 2010s to prove mmWave’s technical feasibility, but it was ultimately the business pressure on service providers that gave the idea momentum. The underlying motivations diverged by market. In the United States, operators targeted the “last mile” with Fixed Wireless Access (FWA) based on mmWave to avoid continuing the costly buildout of Fiber-To-The-Home (FTTH). Verizon Wireless accordingly launched 5G Home on Oct 1, 2018, in four cities using mmWave. It relied on a proprietary 5G specification derived from 3GPP’s LTE Release 12, with added support for 28 GHz and beamforming. This move gave the provider the chance to accelerate learning from an actual deployment while offering a wired-broadband alternative.

In South Korea, the initial driver was not FWA. Korea Telecom (KT) led a national 28 GHz pre-commercial initiative that built on its 5G-SIG/5G-DF program and led to a showcase at the 2018 Winter Olympics in Pyeongchang. The demonstration included multi-view “Time Slice,” first-person “Sync View,” 360° VR, and a 5G autonomous bus, all running on a dense trial network at Olympic venues. This “5G Olympics” was explicitly designed to prove readiness and pull forward 5G commercialization into 2019. In short, mmWave entered 5G through different doors: FWA in the US and high-visibility demonstrations in Korea.

And regulators kept pace. In the U.S., the Federal Communications Commission (FCC) began in 2016 to establish new rules and seek comments and feedback from the industry. These regulatory efforts led to subsequent auctions in 2019 and 2020 for the 28, 24, and 37/39/47 GHz bands (Auctions 101, 102, and 103), supplying operators with mmWave spectrum for additional trials and early rollouts. Globally, the World Radiocommunication Conference 2019 (WRC-19) harmonized spectrum in those frequency bands and added 66 to 71 GHz for IMT services, reinforcing the narrative that mmWave would be 5G’s cutting edge. These decisions came just months after South Korea launched the world’s first 5G services in April 2019 and Verizon switched on its initial mmWave-based 5G networks in Chicago and Minneapolis.

From breakthrough to breakdown: Why did FR2 stumble?

Fast forward to today, and the reality looks very different from the early promise. Fixed Wireless Access (FWA) has indeed become the dominant commercial use case for 5G. But the backbone of those deployments is not limited to mmWave. Operators rely on the full range of spectrum assets, including FR1, to connect homes and businesses.

Experience confirmed the limits many feared: high path loss, foliage, and material penetration issues made coverage far harder than trials suggested. Deployments required non-co-located sites instead of reusing existing macro infrastructure. That approach drove capital expenditure sharply higher and eroded the business case.

As a result, operators worldwide shifted their focus. Initial rollouts leaned heavily on C-Band 3.5 GHz, which became the global anchor band for 5G New Radio (NR). In contrast, mmWave deployments lagged. Because of the slower-than-expected FR2 buildout, service providers failed to meet licensing conditions tied to FR2, such as covering a minimum share of the population or deploying the required number of base stations. In South Korea, the regulator revoked all three Tier-1 operators’ 28 GHz licenses between December 2022 and May 2023. Today, mmWave is used primarily in hotspots. Airports, (NFL) stadiums, concert halls, and shopping malls are among the few environments where extreme user density makes the economics work. Put simply, mmWave makes sense where demand is concentrated, but not elsewhere.

Faced with these realities, the industry has shifted to incremental innovation. Research focuses on technologies that could extend coverage and improve capacity, especially for FWA. One such proposal is the Joint Phase Time Array (JPTA), a novel antenna and RF front-end design. In JPTA, each antenna element connects through a true time delay to the phase shifter. This architecture enables the creation of multiple beams in the frequency domain within a single time slot. The effect is to increase the uplink (UL) duty cycle, which in turn extends coverage.

Samsung Research reported that JPTA could extend the cell radius by up to 47%, which translates into more than doubling the coverage area compared to conventional mmWave phased arrays. The gains were even higher when combined with innovations in power amplifier design and refined beamforming codebooks. However, these results come with caveats. The supporting studies relied on simplified channel models and withheld disclosure of key parameters, raising questions about real-world applicability. Whether such dramatic improvements can be replicated in actual deployment scenarios remains an open research question.
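The step from cell radius to coverage area is simple geometry. As a rough check, assuming the coverage area scales with the square of the cell radius (an idealization that ignores terrain and sector shape; the symbols below are introduced here for illustration only):

```latex
\frac{A_{\mathrm{JPTA}}}{A_{\mathrm{ref}}}
  = \left(\frac{r_{\mathrm{JPTA}}}{r_{\mathrm{ref}}}\right)^{2}
  = 1.47^{2} \approx 2.16
```

Under that assumption, a 47% larger radius corresponds to a bit more than twice the area, in line with the "more than doubling" claim.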

Learning from Failure: Why FR3 Could Be Different—or Not

In the early discussions around 6G, terahertz (THz) frequencies were often part of the vision. Research programs produced several prototypes and high-profile demonstrations, including work by the Fraunhofer Heinrich-Hertz Institute in Berlin, Germany, together with LG Electronics in South Korea. Nonetheless, skepticism about using these frequencies for mobile broadband was evident given the commercial struggles of FR2/mmWave. Industry leaders recognized that success in 6G would require spectrum that balanced feasibility with ambition. The lesson was clear: wider bandwidths are necessary, but coverage must remain acceptable. That shift in mindset brought attention to a new “sweet spot.” Informally labeled “Frequency Range 3” (FR3) or “the upper mid-band,” this range spans 7.125 to 24.25 GHz, with most interest in its lower portion.

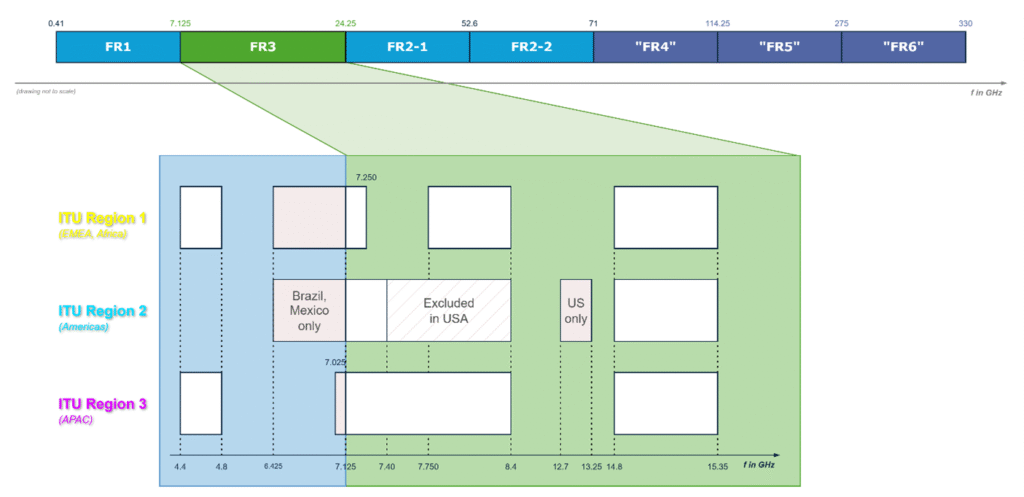

An early signal of regulatory support came in May 2023. The FCC released a Notice of Proposed Rulemaking (NPRM) for 12.7–13.25 GHz, explicitly considering the band for Beyond-5G and 6G services. Later that year, the World Radiocommunication Conference 2023 (WRC-23) convened in Dubai, UAE. More than 3,900 delegates from 163 member states endorsed resolutions to study new spectrum allocations that would feed into future International Mobile Telecommunications (IMT) standards. The final report confirms studies for 4.4–4.8 GHz, 7.125–8.4 GHz, and 14.8–15.35 GHz in preparation for WRC-27. Notably, the final acts did not include any requirement to study spectrum above 100 GHz, a quiet but significant signal that the near-term 6G roadmap would stay below the terahertz frontier.

Figure 1 gives a comprehensive overview of the WRC-23 results, with a breakdown of the IMT spectrum allocations under consideration in each of the three ITU regions. In addition, the resolution for the upper 6 GHz band (6.425–7.125 GHz) is visualized. We will break down these different allocations in the following paragraphs.

Over the past 15 months, the upper part of the 6 GHz frequency band (6.425 to 7.125 GHz) has been one of the most contested ranges. The United States had dedicated this and the lower part of that band (5.925 to 6.425 GHz) to unlicensed use, aiming to strengthen the IEEE 802.11 family of standards. China took the opposite path, designating it for licensed mobile. Other countries, particularly in Europe, sought a compromise by splitting the band: the lower portion for unlicensed use, the upper for licensed. These decisions forced regulators worldwide to choose between the two economic superpowers’ models or craft a hybrid approach.

Zooming in on FR3, the 7 GHz band (7.125–8.4 GHz) is now under study in Regions 2 and 3 (i.e., the Americas and Asia-Pacific). In Region 1 (Europe, Middle East, Africa), part of the range was carved out due to NATO use. The spectrum between 7.25 and 7.75 GHz is assigned to the Fixed Satellite Service (FSS) for downlink (DL) from space to Earth.

Figure 1 also reflects developments in the United States that stem from domestic politics. The “One Big Beautiful Bill Act” (OBBBA), signed into law on July 4, 2025, was controversial in many respects, but it carried significant spectrum provisions. In the U.S., spectrum management is split: the National Telecommunications and Information Administration (NTIA) oversees federal use, and the FCC manages non-federal allocations. The law directed both agencies to identify and auction spectrum for commercial use between 3.1 and 10.5 GHz. However, some blocks were excluded, such as 3.1 to 3.45 GHz and 7.4 to 8.4 GHz.

This new bill sets concrete deadlines for the identification and auction of additional spectrum. The FCC must license not less than 300 MHz of spectrum and, within two years, auction at least 100 MHz between 3.98 and 4.2 GHz. The NTIA must identify 200 MHz within four years and another 300 MHz within eight years for eventual reallocation to non-federal or shared use. In recent testimony, the NTIA highlighted four priority ranges: 1.68 to 1.69 GHz, 2.7 to 2.9 GHz, 4.4 to 4.94 GHz, and 7.125 to 7.4 GHz. Based on these NTIA identifications, the bill requires the FCC to auction 200 MHz within four years (2029) and the remaining 300 MHz within eight years (2034).

Another shift came in May 2025. The FCC announced that the “Upper 12 GHz band” (12.7–13.25 GHz) would be reconsidered for satellite communications, reflecting a lack of consensus on the best way to use it for terrestrial communication, as had been proposed two years prior.

Together, these decisions reshaped the U.S. spectrum outlook for 6G. The potential pool for mobile spectrum dropped from a total available bandwidth of 2,375 MHz to 825 MHz, a reduction of roughly 65% in the FR3 range. Still, the OBBBA preserved significant opportunities, calling for up to 800 MHz of spectrum bandwidth across the 3.45–6.425 GHz and 7.125–10.5 GHz ranges. The lower part of this range offers a clear cost advantage, especially for macro deployments.

Beyond Capacity Crunch Narratives – Why 6G Needs FR3?

The industry often defaults to ‘capacity crunch’ as the justification for new spectrum. That argument, while valid in the 4G LTE era—where video streaming dominated traffic—has become less absolute in the 5G era. Instead, the defining driver for 6G will be UL-heavy extended reality (XR). This may raise the question: didn’t 5G already promise immersive applications? In practice, early releases lacked technical maturity to fully support the services enabling the ‘Mobile Metaverse’. 3GPP addresses the identified shortcomings with Release 17 to 19 and the ongoing Release 20, but commercial networks still run on those early Release 15/16 versions. As a result, data rates and latencies are still inadequate for mass-scale adoption of any form of immersive services. The expectation is that through ongoing trials and stepwise upgrades the industry will further sharpen its understanding. But 6G will ultimately deliver these services at scale, as already anticipated in 3GPP’s Service and Systems Aspects working group 1 (SA1) initial 6G use case studies.

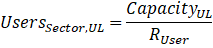

To illustrate why new spectrum is critical, consider the following baseline data rate calculation for an Augmented Reality (AR)-based service, which depends on the following parameters:

- Field-of-view (FoV)

- Pixels-per-degree (PPD)

- Resolution per eye

- Refresh rate (frames per second, fps)

- Color depth (bits per pixel)

- Compression scheme (e.g., H.265/HEVC).

As an example, we use a resolution of 1920×1080 per eye at 90 fps. Such a setup demands UL data rates of 55–80 Mbps per user, primarily to stream live video from AR glasses to the cloud. There, simultaneous localization and mapping (SLAM) and object recognition are performed before results are returned as lightweight overlays in DL. The scenario is an asymmetrical, UL-heavy stress point for today’s networks, which are optimized around DL traffic patterns. Picture a user wearing tethered AR glasses connected to a 6G smartphone, walking through a city promenade while information about shops, transit, or landmarks overlays in real time. The actual data rate fluctuates with scene complexity, encoder latency constraints, and dynamic adaptation of resolution and frame rate based on head movements. But the predicted baseline UL burden remains substantial.
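To get a feel for what the 55–80 Mbps range implies, the short sketch below computes the uncompressed bitrate of the example stream and the compression ratio an encoder would need to reach the quoted rates. It assumes 24 bits per pixel and that both eye streams are sent in the UL; neither assumption is stated in the text, and the function names are illustrative.

```python
def raw_video_bitrate_bps(width, height, fps, bits_per_pixel=24, eyes=2):
    """Uncompressed bitrate in bit/s for a stereo video stream.

    bits_per_pixel=24 and eyes=2 are assumptions made for this sketch.
    """
    return width * height * fps * bits_per_pixel * eyes


def implied_compression_ratio(raw_bps, target_bps):
    """How strongly the encoder must compress to hit a target bitrate."""
    return raw_bps / target_bps


raw = raw_video_bitrate_bps(1920, 1080, 90)  # resolution and fps from the text
print(f"raw stereo stream: {raw / 1e9:.2f} Gbit/s")
for target_mbps in (55, 80):  # UL range quoted in the text
    ratio = implied_compression_ratio(raw, target_mbps * 1e6)
    print(f"{target_mbps} Mbit/s implies roughly {ratio:.0f}:1 compression")
```

Under these assumptions the raw stream is close to 9 Gbit/s, so the quoted UL rates already presuppose compression on the order of 100:1 or more.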

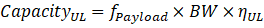

Using these rates (RUser), we can sketch a simplified capacity model for cell-edge performance, as that is where it matters most. The number of users served per sector is determined by the payload factor (fPayload), carrier bandwidth (BW), and UL spectral efficiency (ηUL):

Payload factors account for signaling overhead and the Time Division Duplex (TDD) DL-UL pattern, which is typically set to a 3:1 ratio in today’s 5G NR networks. TDD and Frequency Division Duplex (FDD) alike end up with a payload factor smaller than 1 because of signaling overhead from UL control information (UCI), mandatory Demodulation Reference Signals (DMRS), and the occasional transmission of Sounding Reference Signals (SRS). For spectral efficiency, we assume MU-MIMO in the UL with spatial multiplexing of users on the same physical resources.
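One plausible reading of the relation described above is that users per sector equal fPayload × BW × ηUL divided by RUser. The sketch below implements that reading. It is an illustration rather than the authors' exact model, and every input value is a placeholder chosen for the example, not a value from the article's tables.

```python
def payload_factor(ul_share, overhead_fraction):
    """Fraction of carrier resources left for UL user data.

    ul_share: share of time available to the UL (1.0 for FDD; about 0.25
        for a 3:1 DL:UL TDD pattern, ignoring special slots).
    overhead_fraction: share of UL resources consumed by UCI, DMRS, and SRS.
    """
    return ul_share * (1.0 - overhead_fraction)


def users_per_sector(f_payload, bw_hz, eta_ul, r_user_bps):
    """Users one sector can carry at the given per-user UL rate."""
    return f_payload * bw_hz * eta_ul / r_user_bps


# Illustrative inputs only (hypothetical overhead and spectral efficiency).
f_payload = payload_factor(ul_share=0.25, overhead_fraction=0.2)
n = users_per_sector(f_payload, bw_hz=100e6, eta_ul=1.0, r_user_bps=55e6)
print(f"payload factor {f_payload:.2f}, about {n:.2f} users per sector")
```

With these placeholder inputs a single 100 MHz TDD carrier cannot sustain even one 55 Mbps UL stream, which illustrates how quickly the UL share and overhead eat into capacity.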

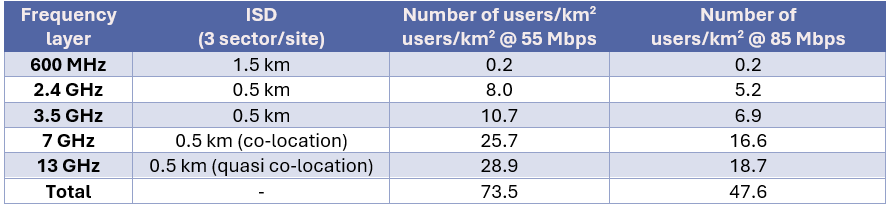

The International Telecommunication Union defines peak spectral efficiency targets for 5G’s enhanced Mobile Broadband (eMBB) use case in its ITU-R M.2410 requirements document as 30 bps/Hz in the downlink and 15 bps/Hz in the uplink — representing the ideal case under perfect link conditions. However, real networks must also satisfy minimum performance thresholds defined at the 5th percentile of normalized user throughput (i.e., cell-edge conditions). For the uplink, those minimums in dense urban and rural environments are 0.15 bps/Hz and 0.045 bps/Hz, respectively. These values serve as compliance guardrails in macro-deployment evaluations rather than performance targets. The following capacity model therefore applies modestly higher uplink spectral efficiency assumptions per frequency layer. Table 1 summarizes the parameters used for this high-level calculation.

For the network layout we assume an ideal hexagonal grid for each frequency layer and apply the base formula with

where ISD stands for Inter-Cell Site Distance. ISD defines the distance between two neighboring base station sites within a network and is frequency dependent.
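For orientation, a standard geometric relation for an ideal hexagonal grid is a site area of (√3/2)·ISD², split evenly across the sectors of a site. The sketch below uses that relation; it may differ from the exact formula the authors applied, and the ISD values and the three-sector split are hypothetical.

```python
import math


def site_area_km2(isd_km):
    """Area served by one site on an ideal hexagonal grid.

    Each site's cell is a regular hexagon with apothem ISD/2.
    """
    return math.sqrt(3) / 2 * isd_km ** 2


def sector_area_km2(isd_km, sectors_per_site=3):
    """Area per sector, assuming the site area splits evenly across sectors."""
    return site_area_km2(isd_km) / sectors_per_site


for isd in (0.2, 0.5, 1.0):  # hypothetical inter-site distances in km
    print(f"ISD {isd} km: site {site_area_km2(isd):.3f} km^2, "
          f"sector {sector_area_km2(isd):.3f} km^2")
```

Because area grows with the square of the ISD, halving the ISD for a higher frequency layer quadruples the number of sites needed for the same footprint.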

Table 2 shows the total number of users served in this deployment scenario based on pragmatic values.

The conclusion is straightforward: without additional FR3 spectrum and UL-optimized network designs, immersive AR-based services cannot scale.

Put simply: today’s spectrum portfolio cannot support XR users at scale. FR3 will fill that gap.

And this is not just a theoretical construction. The “high-end, personal AR assistant” use case outlined above sits on top of trends that Ericsson recently illustrated in two different publications. AR glasses will likely be tethered companions to next-generation smartphones, with compute offloaded to the phone and to edge/cloud, and the number of users in this group is expected to double in the next five years. At the same time, Ericsson’s latest Mobility Report shows that AI-assisted AR shifts traffic patterns toward the UL. Even at modest adoption with low-to-mid quality video, this drives average traffic growth of up to 47% in the UL and 14% in the DL, which confirms the need for explicit uplink improvements and additional spectrum. Together, these findings confirm that the AR/VR trajectory is real, UL-heavy, and spectrum-hungry. 6G will require FR3 to make it viable; otherwise it risks failing much as 5G did when it tried to address the FWA use case with mmWave alone.

The FR3 Deployment Question: Co-Location as the Way Forward

The previous section showed that UL-heavy AR services cannot scale without FR3 spectrum at 7 and 13 GHz. That capacity model already assumed these carriers would be co-located with existing macro grids. That assumption is not just a modeling convenience — it reflects a business lesson the industry has drawn from FR2/mmWave. Non-co-located deployments drove deployment costs beyond viability. For FR3 to succeed, co-location with today’s 5G macro base stations is an absolute requirement from an operator perspective. It is the only way to contain capital expenditure while extending networks into the upper midband.

This requirement, however, introduces technical challenges that the standard must address from the start. 3GPP needs to make FR3 co-location part of the baseline in Release 21, the first normative set of 6G specifications. The timeline for this release is still undecided, but a decision should come no later than June 2026. In the meantime, empirical patterns give us guidance. The Abstract Syntax Notation One (ASN.1) freeze marks the official end of any release by freezing the handshake protocols between network and mobile device. For Release 20, the final release covering only 5G Advanced, that freeze is scheduled for June 2027. At its 6G inauguration workshop in Incheon, South Korea in March 2025, 3GPP agreed not to rush feature work in new releases. It now allows up to 21 months for study item completion in both the Service & Systems Aspects (SA) and Radio Access Network (RAN) groups. This cadence implies that the first code freeze of 6G normative specifications, including FR3 support, can be expected around March 2029. That milestone will mark the official close of Release 21 and the point where co-location in FR3 becomes part of the global 6G foundation.

Coverage vs. Performance: Closing the FR3 Link Budget Gap

If FR3 spectrum is to be co-located with FR1 for macro deployments, its link budget must hold up against today’s most widely deployed 5G frequency bands. Globally, n77 (3.3–4.2 GHz) and n78 (3.3–3.8 GHz) serve as the benchmark. Relative to a 3.5 GHz carrier in these midbands, moving to a 7 GHz carrier frequency increases free-space path loss (FSPL) by 6 dB, and moving to 13 GHz increases it by 11.4 dB. These losses scale with frequency squared and compound with building penetration loss. Penetration matters because empirically 70 to 80% of mobile traffic is generated indoors, with video streaming alone accounting for over 74% of global data traffic in 2024 and expected to reach 82% by the end of 2025.
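The FSPL figures follow directly from the Friis free-space formula, in which loss grows with 20·log10 of the carrier frequency. A minimal sketch, taking 3.5 GHz as the reference carrier (function names are illustrative):

```python
import math


def fspl_db(distance_m, freq_hz):
    """Free-space path loss in dB: 20*log10(4*pi*d*f/c)."""
    c = 299_792_458.0  # speed of light in m/s
    return 20 * math.log10(4 * math.pi * distance_m * freq_hz / c)


def fspl_delta_db(freq_hz, ref_freq_hz):
    """Extra FSPL at freq_hz relative to ref_freq_hz over the same distance."""
    return 20 * math.log10(freq_hz / ref_freq_hz)


for f_ghz in (7.0, 13.0):
    delta = fspl_delta_db(f_ghz * 1e9, 3.5e9)
    print(f"{f_ghz:g} GHz vs 3.5 GHz: +{delta:.1f} dB")
# prints +6.0 dB and +11.4 dB, matching the figures quoted above
```

The distance cancels out of the difference, so the penalty is the same at every point in the cell as long as propagation stays close to free-space conditions.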

To quantify the challenge, 3GPP defines two composite building models as no building is simply made from one material:

- Low-loss: 70% concrete + 30% plain glass — typical of older or simpler structures.

- High-loss: 70% IRR glass + 30% concrete — representing modern, energy-efficient office buildings.

These models capture more than material properties. They also include reflection, diffraction, and layering effects. Applying them reveals a 17.8 (19) dB increase in losses between the low-loss and high-loss model for a carrier frequency of 7 (13) GHz. A recent study carried out by Nokia therefore argues for raising the Total Equivalent Isotropically Radiated Power (EIRP) for these bands from +75 dBm/100 MHz to +85 dBm/100 MHz as a starting point.
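The two composite models can be sketched in a few lines. The per-material coefficients and the 5 dB offset below are the ones commonly cited from the building penetration loss model in 3GPP TR 38.901; treat them as assumptions to check against the specification. The sketch covers only the external-wall term, so its output lands in the same range as the gaps quoted above but need not reproduce them exactly.

```python
import math


# Per-material loss in dB versus frequency in GHz. Coefficients are assumed
# from 3GPP TR 38.901 and should be verified against the specification.
def loss_glass(f_ghz):
    return 2 + 0.2 * f_ghz


def loss_irr_glass(f_ghz):
    return 23 + 0.3 * f_ghz


def loss_concrete(f_ghz):
    return 5 + 4 * f_ghz


def composite_loss_db(f_ghz, share_a, loss_a, share_b, loss_b):
    """Combine two materials by surface share in the linear domain, plus 5 dB."""
    linear = (share_a * 10 ** (-loss_a(f_ghz) / 10)
              + share_b * 10 ** (-loss_b(f_ghz) / 10))
    return 5 - 10 * math.log10(linear)


def low_loss_db(f_ghz):
    # 30% plain glass + 70% concrete
    return composite_loss_db(f_ghz, 0.3, loss_glass, 0.7, loss_concrete)


def high_loss_db(f_ghz):
    # 70% IRR glass + 30% concrete
    return composite_loss_db(f_ghz, 0.7, loss_irr_glass, 0.3, loss_concrete)


for f in (3.5, 7.0, 13.0):
    low, high = low_loss_db(f), high_loss_db(f)
    print(f"{f:g} GHz: low {low:.1f} dB, high {high:.1f} dB, gap {high - low:.1f} dB")
```

Because the shares are combined in the linear domain, the least lossy material dominates each model: plain glass in the low-loss case and IRR glass in the high-loss case.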

But an increase in transmitting power alone is insufficient to offset both FSPL and material penetration. Closing the link budget gap between 3.5 GHz and 7 (13) GHz requires a stack of technology components working together.

What else does the industry have in its toolbox to improve the link budget and extend UL coverage? Here are a few examples that are being considered for enabling FR1 and FR3 co-location.

Waveform optimization. The default waveform for the 5G NR standard is CP-OFDM, with DFT-spread-OFDM as an optional transmission scheme in the uplink. This is unlikely to change drastically for 6G: at the recent 3GPP RAN1#122 meeting in Bengaluru, India, it was agreed that both waveform types serve as the basis for 6G in the DL and UL directions. Any enhancements or modifications will be studied as potential additions. One such enhancement might be the combination of DFT-spread-OFDM with Frequency Domain Spectral Shaping (FDSS). One of CP-OFDM’s drawbacks is its high peak-to-average power ratio (PAPR), which mandates a backoff factor to keep the power amplifier in its linear region. DFT-spread-OFDM was introduced for the uplink to lower the PAPR, but at the cost of making the PAPR dependent on modulation scheme and frequency allocation. FDSS with spectral extension applied to DFT-spread-OFDM offers a practical path to reduce PAPR further. This will let mobile devices drive their power amplifier closer to saturation, enabling higher maximum transmit power without violating RF emission limits and thus directly improving coverage.
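The PAPR difference between the two baseline waveforms can be illustrated with a small simulation. The sketch below compares plain OFDM with DFT-spread OFDM for random QPSK data on a contiguous allocation. It is a toy model under stated assumptions: no cyclic prefix, no FDSS, a naive DFT, 4x oversampling, and arbitrary sizes chosen to keep it fast.

```python
import cmath
import math
import random


def dft(x, inverse=False):
    """Naive O(N^2) DFT/IDFT; adequate for the small sizes used here."""
    n = len(x)
    sign = 1 if inverse else -1
    out = []
    for k in range(n):
        acc = sum(x[m] * cmath.exp(sign * 2j * math.pi * k * m / n)
                  for m in range(n))
        out.append(acc / n if inverse else acc)
    return out


def papr_db(samples):
    """Peak-to-average power ratio of one time-domain symbol in dB."""
    powers = [abs(s) ** 2 for s in samples]
    return 10 * math.log10(max(powers) / (sum(powers) / len(powers)))


def ofdm_symbol(data, n_fft, dft_spread):
    """Map symbols onto contiguous subcarriers, optionally DFT-precoded."""
    freq = dft(data) if dft_spread else list(data)
    grid = freq + [0j] * (n_fft - len(freq))  # zero padding = oversampling
    return dft(grid, inverse=True)


def mean_papr_db(dft_spread, n_symbols=40, n_sc=24, n_fft=96, seed=1):
    """Average PAPR over random QPSK symbols (sizes are illustrative)."""
    rng = random.Random(seed)
    qpsk = [complex(a, b) / math.sqrt(2) for a in (-1, 1) for b in (-1, 1)]
    total = 0.0
    for _ in range(n_symbols):
        data = [rng.choice(qpsk) for _ in range(n_sc)]
        total += papr_db(ofdm_symbol(data, n_fft, dft_spread))
    return total / n_symbols


print(f"CP-OFDM mean PAPR:         {mean_papr_db(False):.1f} dB")
print(f"DFT-spread-OFDM mean PAPR: {mean_papr_db(True):.1f} dB")
```

The DFT-spread variant should come out lower, since precoding restores a near single-carrier envelope. FDSS would add a frequency-domain weighting step between the DFT and the subcarrier mapping, which this sketch leaves out.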

Enhanced duplexing method. Traditionally, transmission is separated either in frequency or in time, known as FDD and TDD mode: FDD uses separate frequencies for DL and UL, while TDD allocates time slots for transmission or reception, including the necessary guard intervals. 5G-Advanced in 3GPP Release 18 introduces Sub-Band Full Duplex (SBFD), which allows simultaneous UL and DL on separate sub-bands of the same carrier. This increases UL transmission opportunities without requiring paired spectrum. Link-level simulations show an astonishing increase in UL coverage of 5.41 dB for FR1 Urban Macro (UMa) deployment scenarios, provided there is sufficient self-interference cancellation at the base station. Extending SBFD principles to FR3 is not optional; it may be the only way to unlock UL-heavy use cases on macro grids.

Machine Learning (ML)-based signal processing. Conventional receivers already work remarkably well. The algorithms powering today’s architecture are the reason smartphones and networks deliver consistent performance under highly variable conditions. But the industry is now chasing the last few percentage points of efficiency and robustness that conventional methods struggle to achieve.

Replacing separate signal processing blocks with trained ML models has therefore gained momentum since the first concepts appeared in 2017. Since then, several proofs of concept have been showcased at major industry events. Nokia’s DeepRx, a fully convolutional deep learning receiver, validated the concept in both simulations and realistic deployment studies. Nvidia Research, in collaboration with Rohde & Schwarz, demonstrated how ML could replace channel estimation and equalization, using a hardware-in-the-loop approach with a standard-compliant receiver chain. This approach bridged the gap between simulation results and the constraints of real hardware, showing that neural receivers can move beyond theory into practical implementation. And most recently, DeepSig, together with Viettel High Tech, reported the first live 5G trial of a neural receiver in a commercial network cluster.

At the heart of these innovations lies the neural receiver, which integrates channel estimation, equalization, and demapping into a single model trained offline but applied in real time. The model leverages both pilot and data-aided estimation, and early studies have shown improvements of up to 2.2 dB compared to state-of-the-art conventional algorithms. While these gains may appear only incremental, they directly improve link margin. And when combined with techniques like digital post-distortion, a current subject of ongoing applied research, gains could be even more substantial.

In conclusion, increasing EIRP through regulation will help. But power alone cannot offset FSPL and penetration losses. Closing the FR3 link budget demands waveform shaping, advanced duplexing, and ML-based receivers—optimized not in isolation, but as a system.

Is FR3 the New FR2? A Careful Yes, a Strong No, and a Cautious Maybe!

The story of FR2 is a cautionary tale. It shows how technical ambition, regulatory enthusiasm, and commercial hype can collide with physics and economics. FR3 offers a second chance. It can provide the spectrum 6G needs for immersive services while avoiding FR2’s pitfalls. Whether it becomes the backbone of 6G or the next disappointment depends not on physics alone, but on how the industry applies the lessons learned.

Operators are already testing FR3 in the field, such as SoftBank’s 7 GHz trial with Nokia. While details are scarce beyond mentions of Massive MIMO, these trials will be critical in showing whether FR3 can deliver macro-scale performance that matches or surpasses the 3.5 GHz baseline.

The conclusion is nuanced. FR3 is not FR2. But it could become FR2 if the industry repeats the same mistakes. The answer to the headline question is this: FR3 will not succeed because regulators declare it so or because vendors hype it. It will succeed only if physics, economics, and deployment realities are addressed together from day one. Otherwise, it risks becoming 5G’s mmWave déjà vu.
